Introduction

This post is derived from the paper FoundationDB: A Distributed Unbundled Transactional Key Value Store, Zhou, et al., 2021. I came across this paper while perusing the list of papers in SIGMOD/PODS 2021 and it piqued my curiosity instantly.

FoundationDB is an open source key-value store and one of the first systems to combine the flexibility and scalability of NoSQL architectures with the power of ACID transactions.

Conventional NoSQL systems typically provide scalability by sacrificing transactional semantics and instead provide eventual consistency. FoundationDB, however, offers strictly serializable transactions while scaling to large workloads. Similar to NoSQL systems, it provides no structured semantics, no query language, data model or schema management leaving application developers with great flexibility.

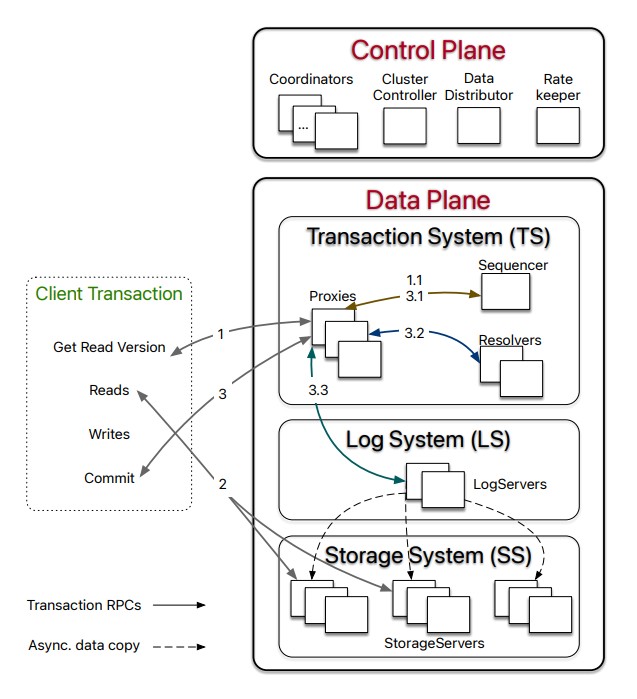

FDB adopts an unbundled architecture (which means that the transactional component is decoupled from the data storage component) that comprises a control plane and a data plane. The control plane manages metadata of the cluster and uses Active Disk Paxos for high availability. The data plane consists of a transactional management system (TS), responsible for processing updates, a distributed storage system (SS) serving reads, and a log system (LS) that stores Write-Ahead-Log for TS, each of which can be independently scaled out. FDB achieves strict serializability through a combination of optimistic concurrency control (OCC) and multi-version concurrency control (MVCC).

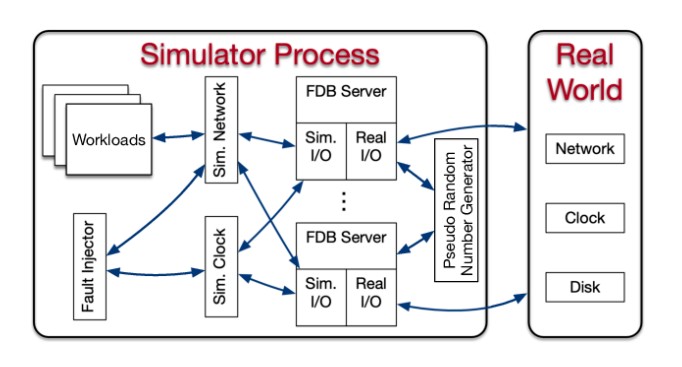

Another unique feature of FDB is its integrated deterministic simulation framework that can simulate a network of interacting processes and myriad disk, process, network and request-level failures and recoveries, thus, enabling rigorous testing.

Design Principles

The main design principles of FDB are as follows. These are covered in more detail in subsequent sections.

Divide-and-Conquer

Also called separation of concerns, this is probably the most important design principle of FDB. FDB decouples the transaction management system (write path) from the distributed storage (read path) and scales them independently. FDB further separates responsibilities in each sub-system by assigning roles to different processes, representing different aspects of transaction management like timestamp management, accepting commits, conflict detection and logging.

Make failure a common case

FDB handles all failures through the recovery path.

instead of fixing all possible failure scenarios, the transaction system proactively shuts down when it detects a failure

This means that all failure handling is done by a single recovery operation (in FDB, the recovery is designed to be fast), which simplifies normal transaction processing.

Fail fast and recover fast

FDB minimizes Mean-Time-To-Recovery, which is essential because failure handling depends on the recovery path.

Simulation testing

FDB relies on a randomized, deterministic simulation framework for testing the correctness of its distributed database.

Architecture

An FDB cluster has a control plane for managing critical system metadata and cluster-wide orchestration, and a data plane for transaction processing and data storage.

Control Plane

The control plane manages the configuration of transaction systems and consists of Coordinators that form a disk Paxos group. Coordinators elect a singleton ClusterController that is responsible for monitoring all servers in the cluster. The ClusterController recruits three singleton processes:

DataDistributorthat is responsible for monitoring failures and balancing data amongStorageServersRateKeeperthat provides overload protection for the clusterSequencerthat assigns read and commit versions to transactions. TheSequenceris part of the transaction management system in the Data Plane

If these singletons fail or crash, the ClusterCoordinator re-recruits them.

Data Plane

Data Plane is where the “unbundled” nature of FDB is exhibited. The Data Plane consists of:

- a distributed transaction management system (TS) that performs in-memory transaction processing

- a log system (LS) that stores Write-Ahead-Log for TS

- a distributed storage system (SS) that stores data and services reads

According to the authors, this architecture scales well for OLTP workloads that are read-mostly, read and write a small set of keys, have low contention and require scalability.

The TS provides transaction processing and consists of stateless processes: a Sequencer, Proxies and Resolvers. The Sequencer assigns read version and commit version to each transaction and recruits Proxies, Resolvers and LogServers. Proxies offer MVCC read versions to clients and orchestrate transaction commits. Resolvers check for conflicts between transactions.

The LS contains a set of LogServers (recruited by Sequencer). Each LogServer stores Write-Ahead-Log data for some StorageServers. In this way, LogServers act as replicated, distributed, sharded persistent queues.

The SS contains a set of StorageServers that serve client reads and store data. Each StorageServer stores a set of data shards comprising of contiguous key ranges. In this way, they form a distributed B-tree.

Scaling

The database scales by increasing the number of processes for each role: Proxies, Resolvers, LogServers, StorageServers. Reads and writes are decoupled. Because clients directly issue reads to sharded SorageServers, reads scale linearly with the number of StorageServers. Writes are scaled by adding more processes to Proxies, Resolvers and LogServers. Thus, there is a clear separation of scaling of client reads from client writes.

Lifecycle of a Transaction

To start a transaction, a client contacts one of the Proxies to obtain a read version. The Proxy obtains a read version from the Sequencer that is guaranteed to be no less than any previously issued transaction commit version. This read version is sent back to the client after which the client may issue multiple reads against the StorageServers at that specific read version. Client writes are buffered locally at the client. To commit the transaction, the client sends the transaction data, which includes the read and written key ranges, to one of the Proxies.

A Proxy commits a transaction in three steps:

- The

Proxycontacts theSequencerto obtain a commit version that is larger than any existing read versions or commit versions.

The

Sequencerchooses the commit version by advancing it at a rate of one million versions per second

The

Proxysends the transaction information toResolvers.Resolversare range-partitioned meaning that eachResolveris responsible for one or more key ranges.Resolverscheck for read-write conflicts, thus implementing optimistic concurrency control (OCC). TheProxymarks the transaction aborted if any of theResolversdetects a conflict. Clients can then choose to restart the transaction from the beginning.If there are no conflicts found, the transaction is sent to a set of

LogServersfor persistence. A transaction is marked as committed after all designatedLogServersreply to theProxy, which then finally, reports the committed version to theSequencer. TheSequenceris, thus, able to ensure that later transactions’ read versions are after this commit version.The

Proxyreplies to the client reporting a successful transaction.StorageServersasynchronously pull mutation logs from theLogServersand apply committed updates to disks.

In contrast to a read-write transaction described above, a read-only transaction is simpler. Clients are provided a read version at which all reads subsequently occur. Clients can commit these transactions locally without contacting the database.

A read-only transaction in FDB is both serializable (happens at the read version) and performant (thanks to the MVCC), and the client can commit these transactions locally without contacting the database. This is particularly important because the majority of transactions are read-only.

Supporting Strict Serializability

FDB combines OCC with MVCC to provide Serializable Snapshot Isolation.

As described above, a transaction receives both its read version and commit version from the Sequencer (via the Proxy). The read version is guaranteed to be no less than any committed version when the transaction starts. The commit version is larger than any existing read and commit versions. The commit version, hence, defines a serial history for transactions and serves as a Log Sequence Number (LSN).

Because [transaction] 𝑇𝑥 observes the results of all previous committed transactions, FDB achieves strict serializability. To ensure there is no gaps between LSNs, the

Sequencerreturns the previous commit version (i.e., previous LSN) with commit version. AProxysends both LSN and previous LSN toResolversandLogServersso that they can serially process transactions in the order of LSNs. Similarly,StorageServerspull log data fromLogServersin increasing LSNs as well.

Note that a transaction commits only when all Resolvers admit the transaction. A transaction may be admitted by some Resolvers but rejected by other Resolvers (because conflict resolution happens on a set of Resolvers in parallel). This may cause subsequent transactions to conflict and fail. The authors point out that that this has not been an issue for their production workloads because a transaction’s key range usually falls into one Resolver. Also, in the case that this issue does arise, the conflicts are limited to only happen within the MVCC time window (set to 5 seconds) because the modified keys expire after this time window.

As can be seen, the advantage of this OCC design is that there are no locks involved in conflict detection, thus simplifying interactions between the TS and SS. The limitations, on the other hand, are that the recent commit history needs to be kept in the Resolvers (to implement the lock-free conflict detection) and the inability to guarantee that all transactions will commit.

Recovery and Reconfiguration

In FDB, recovery is intentionally made very cheap - there is no checkpoint and no need to apply redo or undo log during recovery. The Sequencer drives recovery by first reading the previous transaction system states from Coordinators including the information about old (or existing) LogServers. The Sequencer then stops these LogServers from accepting new transactions and recruits new Proxies, Resolvers and LogServers. Proxies and Resolvers are stateless and hence, incur no extra work. However, for recovering LogServers, the system needs to ensure that all previously committed transactions are durable and retrievable by StorageServers.

The essence of the recovery of old LogServers is to determine the end of redo log, i.e., a Recovery Version (RV). Rolling back undo log is essentially discarding any data after RV in the old LogServers and StorageServers.

A Proxy sends the known committed version as part of requests to LogServers, which is the maximum LSN that this Proxy has committed. Each LogServer keeps track of the maximum KCV received and a Durable Version (DV) which is the maximum persisted LSN and responds with the DV and KCV that it knows about, during recovery when the Sequencer attempts to stop all LogServers. Once the Sequencer receives responses from a certain number of LogServers (the exact number depends on the replication factor and the fault tolerance settings), the Sequencer can identify the RV. When Sequencer accepts new transactions, the first is a special recovery transaction that informs the StorageServers to roll back any data larger than RV.

As is evident, recovery is driven by the Sequencer. The Sequencer follows the same steps (as recovery) when it starts up fresh. Hence, the simplest approach to handling a failure in TS or LS, or a database configuration change, is to terminate the Sequencer and let the ClusterController recruit a new Sequencer. The new Sequencer follows the above steps to spawn a new TS and LS instance, thus dividing the transaction processing into epochs where each epoch represents a generation of the transaction management system with its unique Sequencer process.

Simulation Testing

Simulation testing has enabled FDB to maintain a very high development velocity with a small team by shortening the latency between a bug being introduced and a bug being found, and by allowing deterministic reproduction of issues.

We know that distributed systems are hard to get right. A primary reason is that testing and debugging distributed systems is challenging.

The authors of FoundationDB adopted an ambitious approach of building a deterministic, discrete-event simulation environment capable of running the real database software with randomized synthetic workloads and fault injection. FDB itself was written such that it is amenable to this testing approach.

The simulator process of FDB abstracts all sources of non-determinism and communication like network, disk, time and pseudo-random number generators. The simulator process is capable of spawning multiple FDB servers that communicate with each other through a simulated network in a single discrete event simulation. Both the simulator and FDB are written in Flow, which is a syntactic extension of C++ allowing the Actor programming model.

The simulator runs multiple workloads like fault injection instructions, mock applications, database configuration changes and internal database functionality invocations.

Most of the synthetic workloads verify the contracts and properties of the database. Fault injection workloads inject machine, rack and data-center level fail-stop failures and reboots, network faults, partitions, latency problems, abnormal disk behavior and randomized event times.

FDB itself cooperates with the simulation framework by enabling it to make rare events more common through a technique called “buggification”.

At many places in its code-base, the simulation is given the opportunity to inject some unusual (but not contract-breaking) behavior such as unnecessarily returning an error from an operation that usually succeeds, injecting a delay in an operation that is usually fast, choosing an unusual value for a tuning parameter, etc.

Simulation testing helps in finding bugs quickly. Discrete-event simulation can run arbitrarily faster than real-time as the simulator can fast-forward clock to the next event. Running many simulations in parallel also helps in finding bugs more quickly.

All that said, simulation falls short of reliably detecting performance issues and in testing third-party libraries or dependencies. Consequently, the authors have largely avoided taking dependencies on external systems.

Summary

FoundationDB is a transactional key value store combining the flexibility of NoSQL systems and the guarantees of ACID transactions, which is designed for primarily OLTP workloads. The unbundled architecture of FDB that decouples transaction processing from logging and storage enables independent horizontal scaling of reads and writes. The transaction system combines OCC and MVCC to provide strict serializability of transactions.

FDB handles all failures through the recovery path. The decoupling of logging and determinism in transaction orders simplifies recovery by allowing redo and undo log processing to transpire asynchronously, permitting quick recovery.

Finally, FDB has built-in support for randomized but deterministic simulation testing that enables rapid development and testing and ensures the correctness of the database implementation.