Introduction

Zanzibar is a system developed at Google for storing permissions in the form of access control lists (ACLs) and performing authorization checks based on the stored permissions. It is used by more than 1500 client services at Google, including Calendar, Cloud, Drive, Maps, Photos, and YouTube.

A unified authorization system like Zanzibar offers several advantages over maintaining separate access control mechanisms for individual applications:

- It helps establish consistent semantics and user experience across applications

- It makes it easier for applications to interoperate, for example, to coordinate access control when an object from one application embeds an object from another application

- Common infrastructure like a search index can be built on top of a unified access control system that works across applications

- It saves engineering resources by tackling challenges involved in building a scalable consistent authorization system once across applications

The salient features of Zanzibar are as follows:

Consistency: Zanzibar ensures that ACL checks respect the order in which users modify ACLs and object contents.

Flexibility: Zanzibar supports a rich set of access control policies by pairing a simple data model with a powerful configuration language.

Scalability: As a unified authorization system, Zanzibar is built to scale. It scales to trillions of ACLs and millions of authorization requests per second to support services with billions of users.

Low Latency: It maintains 95th-percentile latency of less than 10 ms. Since authorization checks are often in the critical path of user interactions, low latency responses are crucial.

High Availability: It has maintained availability of greater than 99.999% over 3 years of production use at Google. High availability is important because, in the absence of explicit authorizations, client services would be forced to deny their users access.

Data Model

In Zanzibar, ACLs are collections of object-user or object-object relations represented as relation tuples. Groups are simply ACLs with membership semantics.

Relation tuples are 3-tuples consisting of an object, a relation and a userset. The values that the relation can take are predefined in client configuration. The userset can represent either a single user or a combination of an object and a relation. The second form allows ACLs to refer to groups, which in turn supports nested group membership.
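To make the tuple shape concrete, here is a minimal sketch in Python. It assumes the `object#relation@user` text notation used in the paper; the class names, the `parse` helper and the example object IDs are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Userset:
    """Either a single user, or everyone holding `relation` on `obj`."""
    user: Optional[str] = None
    obj: Optional[str] = None
    relation: Optional[str] = None


@dataclass(frozen=True)
class RelationTuple:
    obj: str        # e.g. "doc:readme"
    relation: str   # e.g. "viewer"
    userset: Userset


def parse(text: str) -> RelationTuple:
    """Parse 'object#relation@user' or 'object#relation@object#relation'."""
    left, right = text.split("@", 1)
    obj, relation = left.split("#", 1)
    if "#" in right:
        # The userset refers to another object-relation pair (e.g. a group).
        target_obj, target_rel = right.split("#", 1)
        return RelationTuple(obj, relation, Userset(obj=target_obj, relation=target_rel))
    return RelationTuple(obj, relation, Userset(user=right))


# A direct grant, and a grant to all members of a group.
direct = parse("doc:readme#viewer@alice")
via_group = parse("doc:readme#viewer@group:eng#member")
```

The second tuple is what lets an ACL point at a group: the group itself is just another object whose `member` relation has its own tuples.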

Relation tuples are stored as rows in databases. Each namespace gets its own database, where namespace is predefined in client configuration.

Defining the data model around tuples, instead of per-object ACLs, allows Zanzibar to unify the concepts of ACLs and groups and to support efficient reads and incremental updates.

Consistency Model

Consistency in Zanzibar is designed primarily to thwart the New Enemy Problem. This problem occurs in authorization systems if the order of updates (and checks) to permissions and objects is not honored.

For example, consider a case where user Alice removes user Bob from the ACL of a document and then updates the document with content that must not be visible to Bob. Bob should not be able to see the restricted content but may do so if the ACL check neglects the ordering between removal and content update. That is, if the ACL check for Bob “sees” the document update before the permission update, Bob may have access to the new information.

A similar case occurs when ordering between updates to ACLs is not respected. If Alice removes Bob from the ACL of a folder and then moves new documents to the folder where document ACLs inherit from folder ACLs, Bob may be able to see the new documents if the ACL check for Bob neglects the ordering between the two ACL changes.

Preventing the “new enemy” problem requires Zanzibar to understand and respect the causal ordering between ACL or content updates, including updates on different ACLs or objects and those coordinated via channels invisible to Zanzibar. Hence Zanzibar must provide two key consistency properties: external consistency and snapshot reads with bounded staleness.

To provide external consistency and snapshot reads with bounded staleness, Zanzibar stores ACLs in the Spanner global database system. Spanner’s TrueTime mechanism assigns each ACL write a microsecond-resolution timestamp such that the timestamps of writes reflect the causal ordering between writes, thereby providing external consistency.

The staleness bound for snapshot reads is made possible by zookies. A zookie is an opaque byte sequence encoding a globally meaningful timestamp that reflects an ACL write, a client content version, or a read snapshot. Clients can supply a zookie in a request to ensure that the request is evaluated at a snapshot that is at least as fresh as the timestamp encoded in the zookie, thus bounding staleness.
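The "at least as fresh" rule can be sketched in a few lines of Python. The real zookie encoding is opaque and not described here, so the base64-wrapped 64-bit timestamp below is purely an assumption, as are the function names.

```python
import base64
import struct
from typing import Optional


def encode_zookie(timestamp_us: int) -> bytes:
    """Hypothetical encoding: a big-endian 64-bit microsecond timestamp."""
    return base64.urlsafe_b64encode(struct.pack(">Q", timestamp_us))


def decode_zookie(zookie: bytes) -> int:
    return struct.unpack(">Q", base64.urlsafe_b64decode(zookie))[0]


def choose_snapshot(default_snapshot_us: int, zookie: Optional[bytes]) -> int:
    """Pick an evaluation timestamp that is never older than the zookie."""
    if zookie is None:
        # No freshness requirement: use the server's default (possibly stale) snapshot.
        return default_snapshot_us
    return max(default_snapshot_us, decode_zookie(zookie))
```

If the server's default snapshot is already newer than the zookie, nothing changes. Otherwise the request is pushed forward to the zookie's timestamp, which is what bounds staleness.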

API

Read

A read request specifies one or more tuplesets and an optional zookie. Each tupleset specifies keys of a set of relation tuples. All tuplesets in a read request are processed at a single snapshot.

With the zookie, clients can request a read snapshot no earlier than a previous write if the zookie from the write response is given in the read request, or at the same snapshot as a previous read if the zookie from the earlier read response is given in the subsequent request. If the request doesn’t contain a zookie, Zanzibar will choose a reasonably recent snapshot, possibly offering a lower-latency response than if a zookie were provided.

Unlike the Expand API, read does not reflect userset rewrite rules. For example, if userset A always includes userset B, reading tuples with relation A will not return tuples with relation B. Clients that need to understand the effective userset use the Expand API.

Write

A write request may modify a single relation tuple to add, modify or remove an ACL. There are usually many relation tuples related to an object. Clients may modify all tuples related to an object through a read-modify-write process with optimistic concurrency control. This process uses a read RPC followed by a write RPC with a per-object “lock” tuple to detect write races.
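The read-modify-write loop can be sketched as follows. This is an in-memory stand-in, not Zanzibar's API: the `TupleStore` class, the integer version standing in for the per-object "lock" tuple, and the `add_viewer` helper are all assumptions made for illustration.

```python
class WriteConflict(Exception):
    """Raised when the lock tuple changed between the read and the write."""


class TupleStore:
    def __init__(self):
        self.tuples = {}        # object -> set of (relation, user)
        self.lock_version = {}  # object -> int, standing in for the "lock" tuple

    def read(self, obj):
        return set(self.tuples.get(obj, set())), self.lock_version.get(obj, 0)

    def write(self, obj, new_tuples, expected_version):
        # The write only succeeds if nobody else wrote since our read.
        if self.lock_version.get(obj, 0) != expected_version:
            raise WriteConflict(obj)
        self.tuples[obj] = set(new_tuples)
        self.lock_version[obj] = expected_version + 1


def add_viewer(store, obj, user, retries=3):
    """Read all tuples for obj, add one, and write back; retry on a race."""
    for _ in range(retries):
        current, version = store.read(obj)
        try:
            store.write(obj, current | {("viewer", user)}, version)
            return True
        except WriteConflict:
            continue
    return False
```

A writer that loses the race simply re-reads and tries again, so no lock is ever held across the two RPCs.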

Watch

A watch request specifies one or more namespaces and a zookie representing the time to start watching. A watch response contains all modification events in ascending timestamp order, from the requested timestamp to a timestamp encoded in a heartbeat zookie included in the watch response. The client can use the heartbeat zookie to resume watching where the previous watch response left off.
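A rough sketch of how a client might resume from a heartbeat is shown below. Zookies are reduced to bare integer timestamps, and the `watch` function, the changelog contents and the explicit `now_ts` argument are hypothetical.

```python
import bisect

# Changelog entries as (commit timestamp, event), sorted by timestamp.
CHANGELOG = [
    (10, "add doc:1#viewer@bob"),
    (20, "remove doc:1#viewer@bob"),
    (30, "add doc:2#viewer@carol"),
]


def watch(changelog, start_ts, now_ts):
    """Return events with start_ts < ts <= now_ts, plus a heartbeat timestamp."""
    timestamps = [ts for ts, _ in changelog]
    lo = bisect.bisect_right(timestamps, start_ts)
    hi = bisect.bisect_right(timestamps, now_ts)
    return changelog[lo:hi], now_ts


# First call starts from timestamp 0; the second resumes from the heartbeat.
first_events, heartbeat = watch(CHANGELOG, 0, 25)
next_events, _ = watch(CHANGELOG, heartbeat, 40)
```

Because the second call starts strictly after the heartbeat, the client sees every event once and in timestamp order.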

Check

A check request specifies a userset (represented by an object and a relation), a user (represented by an authentication token), and a zookie corresponding to the desired object version.

Content-change check

This is a special type of check request used to authorize application content modifications. It carries no zookie and is therefore evaluated at the latest snapshot. If a content change is authorized, the check response includes a zookie for clients to store along with object contents and use for subsequent checks on the same version.

Expand

An expand request specifies an object-relation pair and an optional zookie and returns the effective userset. Unlike the Read API, Expand follows indirect references expressed through userset rewrite rules. For example, if userset A always includes userset B, expanding tuples with the relation A will return tuples with the relation B too.

Expand is crucial for Zanzibar’s clients to reason about the complete set of users and groups that have access to their objects, which allows them to build efficient search indices for access-controlled content.
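The difference between Read and Expand can be illustrated with a toy example. The tuple table and the single rewrite rule ("viewer also includes editor") are invented for this sketch. The real Expand API returns a userset tree, whereas this version flattens the result into a plain set.

```python
# Directly stored tuples: (object, relation) -> users.
TUPLES = {
    ("doc:readme", "editor"): {"alice"},
    ("doc:readme", "viewer"): {"bob"},
}

# Hypothetical rewrite rules: relation -> relations whose usersets it also includes.
REWRITES = {"viewer": ["editor"]}


def read(obj, relation):
    """Return only the tuples stored for this exact relation."""
    return set(TUPLES.get((obj, relation), set()))


def expand(obj, relation, seen=None):
    """Return the effective userset, following rewrite rules recursively."""
    seen = set() if seen is None else seen
    if (obj, relation) in seen:
        return set()  # guard against cyclic rules
    seen.add((obj, relation))
    users = read(obj, relation)
    for included in REWRITES.get(relation, []):
        users |= expand(obj, included, seen)
    return users
```

Here `read("doc:readme", "viewer")` contains only `bob`, while `expand("doc:readme", "viewer")` also contains `alice` because editors are implicitly viewers.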

Architecture

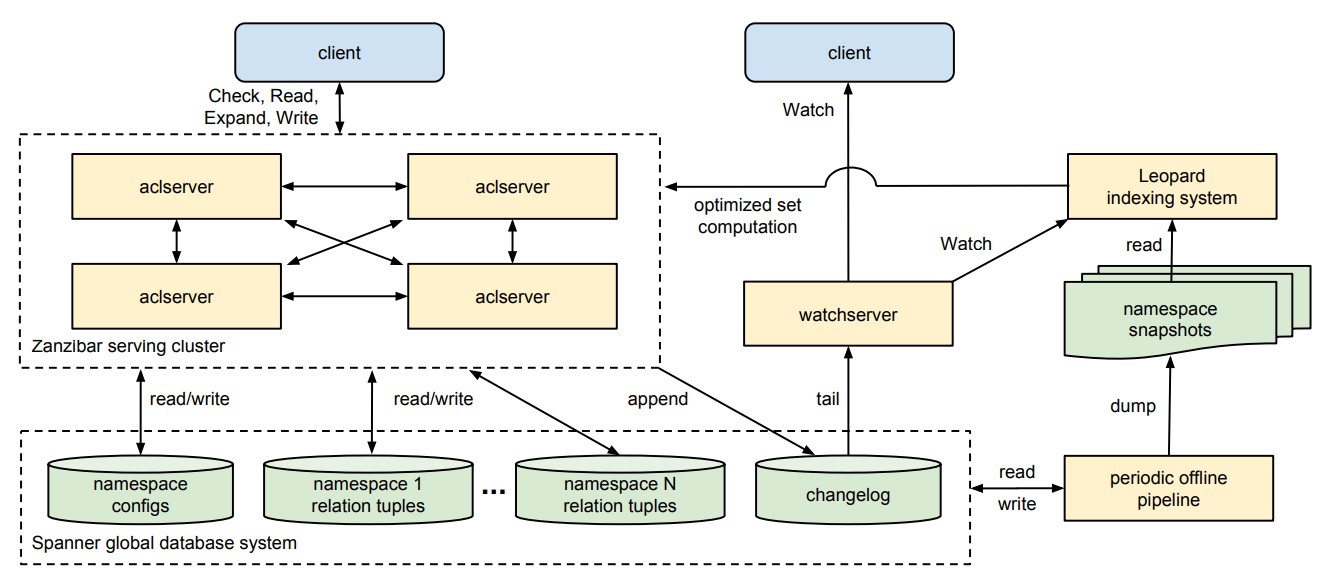

Zanzibar consists of the following major components:

- a serving cluster consisting of

  - aclservers that respond to Check, Read, Write and Expand requests. Requests arrive at any server in the cluster, and that server fans out the work to other servers as necessary. Those servers may in turn contact other servers. The initial server gathers the final result and returns it to the client.

  - a set of specialized servers called watchservers that respond to Watch requests. These servers tail the changelog and serve a stream of namespace changes to clients in real time.

- a collection of Spanner databases. There is one database for each client namespace to hold the relation tuples, one database for storing the changelog shared by all namespaces, and one database to hold all namespace configurations.

- a data processing pipeline that runs periodic jobs to perform a variety of functions across all Zanzibar data in Spanner, like producing dumps of the relation tuples in each namespace at known snapshot timestamps or garbage-collecting tuple versions older than a threshold configured per namespace

- an indexing system called Leopard, used to optimize operations on large and deeply nested sets. It reads periodic snapshots of ACL data, watches for changes between snapshots to keep its data current, performs transformations on the data (such as denormalization), and responds to requests from aclservers.

Storage

Zanzibar stores relation tuples of each namespace in a separate database. Each row in these databases is a relation tuple. Multiple tuple versions are stored on different rows so that checks and reads at any timestamp within the garbage collection window can be performed.
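The idea of keeping several versions per tuple so that a read can be answered "as of" a timestamp can be sketched like this. The `VersionedTuples` class is hypothetical and assumes versions are written in ascending commit-timestamp order. Real storage would also garbage-collect old versions.

```python
class VersionedTuples:
    def __init__(self):
        # tuple key -> list of (commit_ts, present), in ascending commit_ts order
        self.versions = {}

    def write(self, key, commit_ts, present):
        """Record that the tuple was added (True) or deleted (False) at commit_ts."""
        self.versions.setdefault(key, []).append((commit_ts, present))

    def exists_at(self, key, snapshot_ts):
        """Return whether the tuple existed at the given snapshot timestamp."""
        result = False
        for commit_ts, present in self.versions.get(key, []):
            if commit_ts > snapshot_ts:
                break  # later versions are invisible at this snapshot
            result = present
        return result


# Bob is granted access at t=10 and removed at t=20.
store = VersionedTuples()
store.write("doc:1#viewer@bob", 10, True)
store.write("doc:1#viewer@bob", 20, False)
```

A check evaluated at a snapshot of 15 still sees Bob, while a check at 25 does not. This is why the snapshot chosen for a check must be at least as fresh as the content version being protected.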

The changelog database stores a history of tuple updates for the Watch API. Every Zanzibar write is committed to both the tuple storage and the changelog shard in a single transaction.

Namespace configs are stored in a database with two tables, one containing the configs keyed by namespace IDs and the other containing the changelog of config updates keyed by commit timestamps. The database design is chosen such that Zanzibar is able to load all configs upon startup and monitor the changelog to refresh configs continuously.

Evaluation Timestamp and Zookie

As mentioned before, clients can provide zookies to ensure a minimum snapshot timestamp for request evaluation. When a zookie is not provided, Zanzibar uses a default staleness chosen such that the evaluation timestamp is as recent as possible without impacting latency. Latency impact is kept at a minimum when choosing default staleness by minimizing cross-zone (cross-datacenter) round-trips.

On each read request it makes to Spanner, Zanzibar receives a hint about whether or not the data at that timestamp required an out-of-zone read and therefore incurred additional latency. Each server thus builds a frequency distribution of such out-of-zone reads for data at the default staleness and for fresher and staler data. This lets each server estimate the probability that any given piece of data at a particular timestamp is available locally. The default staleness is then updated to the lowest value that is unlikely to incur an out-of-zone read.
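One way to picture this bookkeeping is the sketch below. It is an assumption-heavy simplification: the candidate staleness values, the 1% threshold, the minimum sample count and the `StalenessEstimator` class are all hypothetical, and a real implementation would use a proper statistical test rather than a raw ratio.

```python
from collections import defaultdict


class StalenessEstimator:
    def __init__(self, candidates_ms, max_out_of_zone_rate=0.01, min_samples=100):
        self.candidates_ms = sorted(candidates_ms)
        self.max_rate = max_out_of_zone_rate
        self.min_samples = min_samples
        self.total = defaultdict(int)        # staleness -> reads observed
        self.out_of_zone = defaultdict(int)  # staleness -> reads that left the zone

    def record(self, staleness_ms, was_out_of_zone):
        """Record the hint returned with one read at the given staleness."""
        self.total[staleness_ms] += 1
        if was_out_of_zone:
            self.out_of_zone[staleness_ms] += 1

    def default_staleness(self):
        """Lowest staleness whose observed out-of-zone rate is acceptable."""
        for staleness in self.candidates_ms:
            n = self.total[staleness]
            if n >= self.min_samples and self.out_of_zone[staleness] / n <= self.max_rate:
                return staleness
        return self.candidates_ms[-1]  # fall back to the stalest candidate


estimator = StalenessEstimator([1000, 5000, 10000])
for i in range(100):
    estimator.record(1000, was_out_of_zone=(i % 2 == 0))  # half the reads leave the zone
    estimator.record(5000, was_out_of_zone=False)
    estimator.record(10000, was_out_of_zone=False)
chosen = estimator.default_staleness()
```

With these made-up observations the estimator skips the freshest candidate, since half of its reads went out of zone, and settles on the next one.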

Leopard Indexing System

Zanzibar evaluates ACL checks by converting check requests to boolean expressions. Since the data model allows userset rewriting rules (like nested group membership), evaluating such requests involves evaluating membership on all indirect ACLs or groups, recursively. This works well for most types of ACLs or groups but can be expensive when indirect ACLs or groups are deep or wide. For selected namespaces that exhibit such structure, Zanzibar handles checks using Leopard, a specialized index that supports efficient set computation.
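The recursive evaluation, and why depth hurts, can be seen in a small sketch. The tuple table and the `check` function are hypothetical. Userset subjects are written as `object#relation` strings, and each level of nesting costs another lookup.

```python
# (object, relation) -> subjects; a subject is a user or an "object#relation" userset.
TUPLES = {
    ("doc:readme", "viewer"): {"group:eng#member", "carol"},
    ("group:eng", "member"): {"alice", "group:infra#member"},
    ("group:infra", "member"): {"bob"},
}


def check(obj, relation, user, seen=None):
    """Return True if user is in obj#relation, following nested usersets."""
    seen = set() if seen is None else seen
    if (obj, relation) in seen:
        return False  # already explored; avoids infinite loops on cycles
    seen.add((obj, relation))
    for subject in TUPLES.get((obj, relation), ()):
        if subject == user:
            return True
        if "#" in subject:
            # Indirect membership: recurse into the referenced userset.
            child_obj, child_rel = subject.split("#", 1)
            if check(child_obj, child_rel, user, seen):
                return True
    return False
```

Checking `bob` against `doc:readme#viewer` needs three lookups here. With groups that are thousands of levels deep or wide, this pointer chasing is the cost Leopard is meant to avoid.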

Modeling group membership as a reachability problem in a graph, where nodes represent groups and users, and edges represent direct membership, Zanzibar flattens group-to-group paths to allow reachability to be efficiently evaluated by Leopard. Other types of denormalization are also applied as data patterns demand.
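Flattening can be thought of as precomputing reachability for every group. The sketch below does that with a plain depth-first traversal over a hypothetical `direct` membership map. It is only meant to show the idea, not how Leopard actually builds or stores its index.

```python
def flatten_memberships(direct):
    """Map each group to everything reachable from it via membership edges.

    direct: group -> set of direct members (users or other groups).
    """
    flat = {}
    for group in direct:
        reachable, stack = set(), [group]
        while stack:
            node = stack.pop()
            for member in direct.get(node, ()):
                if member not in reachable:
                    reachable.add(member)
                    stack.append(member)
        flat[group] = reachable
    return flat


direct = {
    "group:all": {"group:eng", "carol"},
    "group:eng": {"alice", "bob"},
}
flat = flatten_memberships(direct)
```

After flattening, asking whether `alice` belongs to `group:all` is a single set lookup instead of a walk through `group:eng`.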

The Leopard system consists of three discrete parts:

- a serving system for consistent and low-latency operations across sets

- an offline, periodic index building system

- an online real-time layer capable of continuously updating the serving system as tuple changes occur

Index tuples are stored as ordered lists of integers in a structure such as a skip list, which allows efficient union and intersection among sets.
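Keeping the IDs ordered is what makes set operations cheap. As an illustration, the sketch below intersects two sorted lists of integer IDs with a two-pointer merge. It uses plain Python lists rather than skip lists, which would additionally allow skipping ahead in large steps.

```python
def intersect_sorted(a, b):
    """Intersect two ascending lists of integers in O(len(a) + len(b))."""
    i = j = 0
    out = []
    while i < len(a) and j < len(b):
        if a[i] == b[j]:
            out.append(a[i])
            i += 1
            j += 1
        elif a[i] < b[j]:
            i += 1  # a[i] cannot appear later in b, so drop it
        else:
            j += 1
    return out
```

A non-empty intersection between the groups a user belongs to and the groups that grant access means the check passes.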

The offline index builder generates index shards from a snapshot of Zanzibar relation tuples and configs, and replicates the shards globally. It respects userset rewrite rules and recursively expands edges in an ACL graph to form Leopard index tuples.

The online real-time layer indexes all updates since the offline snapshot, where each update contains the timestamp of the update. Updates with timestamps less than or equal to the query timestamp are merged on top of the offline index during query processing. To maintain this layer, the Leopard indexer calls Zanzibar’s Watch API to receive temporally ordered Zanzibar tuple modifications and transforms them into temporally ordered Leopard tuple additions, updates and deletions.
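The merge of the online layer onto the offline index can be sketched as replaying timestamped updates up to the query timestamp. The function name, the `"add"`/`"delete"` operation labels and the integer element IDs are assumptions for this example.

```python
def members_at(offline_snapshot, updates, query_ts):
    """Apply updates with ts <= query_ts on top of the offline snapshot.

    updates: list of (ts, op, element) in ascending ts order,
             where op is "add" or "delete".
    """
    result = set(offline_snapshot)
    for ts, op, element in updates:
        if ts > query_ts:
            break  # updates newer than the query snapshot are ignored
        if op == "add":
            result.add(element)
        else:
            result.discard(element)
    return result


offline = {1, 2, 3}
updates = [(110, "add", 4), (120, "delete", 2), (130, "add", 5)]
```

Querying at timestamp 125 applies the first two updates and ignores the third, so the same index can answer checks at different snapshots.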

Tail Latency Mitigation

Clients expect low latency responses from Zanzibar because authorization checks are often in the critical path of user interactions.

Zanzibar relies on request hedging to accommodate relatively slow tasks like calling Spanner and the Leopard index. Request hedging is the technique of sending the same request to multiple servers and using whichever response comes back first (and canceling the other requests).

To avoid unnecessarily multiplying load, the hedged requests aren’t sent until the initial request is known to be slow. Each server maintains a hedging delay estimator that dynamically computes an Nth percentile latency based on recent measurements. This limits the additional traffic incurred by hedging to a small fraction of total traffic.
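A delayed hedge can be sketched with `asyncio`. Here `hedged_call`, `make_request` and the replica names are hypothetical, and `hedge_delay_s` stands in for whatever the per-server delay estimator would compute. The sketch hedges to at most one extra replica.

```python
import asyncio


async def hedged_call(make_request, replicas, hedge_delay_s):
    """Send to the first replica; hedge to a second only if the first is slow."""
    tasks = [asyncio.create_task(make_request(replicas[0]))]
    done, _ = await asyncio.wait(tasks, timeout=hedge_delay_s)
    if not done:
        if len(replicas) > 1:
            # The primary exceeded the hedging delay: race it against a second replica.
            tasks.append(asyncio.create_task(make_request(replicas[1])))
        done, _ = await asyncio.wait(tasks, return_when=asyncio.FIRST_COMPLETED)
    for task in tasks:
        if task not in done:
            task.cancel()  # the slower request is no longer needed
    return next(iter(done)).result()


async def fake_backend(replica):
    """Stand-in backend whose latency depends on the replica name."""
    await asyncio.sleep({"slow": 0.5, "fast": 0.01}[replica])
    return replica
```

When the primary answers within the delay, no second request is ever sent, which is how the extra load stays small.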

Also, to reduce round-trip times, Zanzibar tries to place at least two replicas of these backend services in every geographical region that also contains Zanzibar servers.

Parting words

Zanzibar scales to trillions of access control rules and millions of authorization requests per second. It employs several techniques to provide scalability, low latency and high availability.

I haven’t covered all aspects of the Zanzibar system mentioned in the paper to keep this review short. Do check out the paper for techniques to handle hot spots and to isolate performance problems, and get a glimpse of real-world data on Zanzibar’s latency and availability.